Data pipelines are not just an upgrade to ETL processes but a transformative approach that equips businesses to be future-ready.

This article is sponsored and originally appeared on Ascend.io.

In the modern world of data engineering, two concepts often find themselves in a semantic tug-of-war: data pipeline vs ETL. In the early days of data management, ETL processes offered a substantial leap forward in how we handled data: they provided a structured, systematic way to move data from one place to another, transforming it along the way to fit specific needs.

Fast forward to the present day, and we now have data pipelines. These are more advanced, more complex, and more capable, offering greater flexibility and automation. However, they are not just an upgraded version of ETL: they are different tools with different capabilities, designed to handle different tasks.

This article examines the “data pipeline vs ETL” discussion, clearly defining the differences and similarities between the two and explaining why understanding these nuances is critical to an effective data strategy.

Before we explore the differences between the ETL process and a data pipeline, let’s acknowledge their shared DNA. Data ingestion, transformation, and sharing act as the underlying structure, or the skeleton if you will, upon which the rest of their functionality is built.

Data ingestion is the first step of both ETL and data pipelines. In the ETL world, this is called data extraction, reflecting the initial effort to pull data out of source systems.

If you’ve used ETL tools like Informatica, Talend, Fivetran, or Stitch before, you’re familiar with the need to connect to source systems to acquire data for analytics. The data sources themselves are not built to perform analytics. Instead, they run business processes, such as powering shopping apps, embedding advertising in media content, or running a bank. Data extraction is simply concerned with pulling data out of them with minimal disruption and maximum fidelity. ETL tools usually pride themselves on their ability to extract from many variations of source systems.

In the data pipeline world, this step is called data ingestion, reflecting the perspective that it’s just the first step in a series of operations that constitute a network of data pipelines. Yet, the technical problem is the same.

Because source systems vary so widely, the data collected during the ingestion phase is often raw, messy, and unstructured. In the ETL world, data transformation is intended to change the structure of the source data to match a specific target database schema, usually in the context of a data warehouse. ETL tools are designed to close exactly this gap, sometimes with a simple one-source-to-one-target mapping.

In the data pipeline world, the transformation process is also used to clean, combine, and restructure the source data. However, data pipeline transformations are far more adaptable, and they frequently use networks of operations to extract valuable information: filtering out errors, aggregating and distilling data into insights, and arranging data into multiple formats suited to different kinds of downstream analysis.

After the transformations, the resulting processed datasets are shared for further analysis and visualization. In the ETL world, this is called data loading, and the target is typically a data warehouse. If the datasets are needed in a different type of destination, such as an application or an API, an additional reverse ETL tool is used to load them there.

In the data pipeline world, the cleaned, organized datasets are shared in as many different formats and systems as needed to create new business value. The pipeline endpoint can even be queried directly by data scientists, analysts, and business users.
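To make the shared skeleton concrete, here is a minimal sketch of the three stages in Python using only the standard library. It assumes a hypothetical CSV export as the source and an in-memory SQLite database standing in for the target warehouse; the function names `extract`, `transform`, and `load` and the `orders` table are illustrative, not taken from any particular ETL or pipeline product.

```python
import csv
import io
import sqlite3

# Hypothetical source export; a real source would be an operational system.
RAW_CSV = """order_id,customer,amount_usd
1, Alice ,19.99
2,Bob,
3, carol,5.00
"""


def extract(raw: str) -> list[dict]:
    """Extract/ingest: read rows from the source without changing them."""
    return list(csv.DictReader(io.StringIO(raw)))


def transform(rows: list[dict]) -> list[tuple]:
    """Clean rows and reshape them to match the target table's schema."""
    cleaned = []
    for row in rows:
        if not row["amount_usd"].strip():
            continue  # drop rows that are missing a required field
        cleaned.append((
            int(row["order_id"]),
            row["customer"].strip().title(),  # normalize customer names
            round(float(row["amount_usd"]), 2),
        ))
    return cleaned


def load(rows: list[tuple], conn: sqlite3.Connection) -> None:
    """Load/share: write the cleaned rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount_usd REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")  # stands in for a data warehouse
load(transform(extract(RAW_CSV)), conn)
rows = conn.execute("SELECT * FROM orders ORDER BY order_id").fetchall()
```

This is the simple one-source-to-one-target shape described above; real tools add connectors, scheduling, and error handling on top of it.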

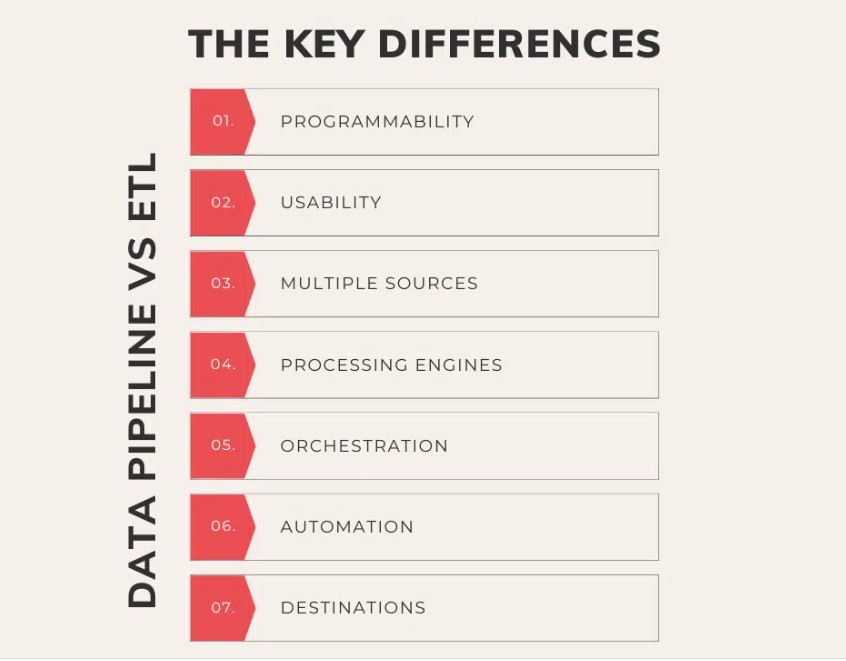

While ETL and data pipelines share common processes, they diverge significantly in their capabilities and attributes. Let’s delve into the distinct differences that set them apart and resolve the data pipeline vs ETL dichotomy.

In the context of data processing, programmability refers to the ability to customize and write complex operations. ETL tools, while efficient and capable of performing many tasks, often come with a fixed set of predefined functions and lack flexibility. They are designed to follow a rigid, linear process of ingestion and transformation, and sharing data is often limited to a single predefined destination.

Data pipelines, conversely, are far more programmable. They enable the construction of complex networks of data workflows, with more granular control over the data operations and the ability to write custom code. This flexibility makes data pipelines suitable for the more diverse data scenarios common in modern data-driven business use cases.
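As a hedged illustration of what writing custom code can mean, the sketch below implements a transformation that a fixed menu of predefined functions may not offer: keeping only the most recent version of each record, then feeding that result into a further custom step. The record fields (`customer_id`, `email`, `updated_at`) and the helpers `latest_per_key` and `email_domains` are hypothetical.

```python
from datetime import datetime

# Hypothetical change records pulled from a source system.
events = [
    {"customer_id": 1, "email": "a@old.example", "updated_at": "2024-01-01T10:00:00"},
    {"customer_id": 2, "email": "b@example.com", "updated_at": "2024-01-02T09:00:00"},
    {"customer_id": 1, "email": "a@new.example", "updated_at": "2024-01-03T08:30:00"},
]


def latest_per_key(records, key, ts_field):
    """Keep only the most recent record for each key, ordered by key."""
    latest = {}
    for rec in records:
        ts = datetime.fromisoformat(rec[ts_field])
        seen = latest.get(rec[key])
        if seen is None or ts > seen[0]:
            latest[rec[key]] = (ts, rec)
    return [rec for _, rec in sorted(latest.values(), key=lambda pair: pair[1][key])]


def email_domains(records):
    """A further custom step that consumes the deduplicated output."""
    return {r["customer_id"]: r["email"].split("@", 1)[1] for r in records}


current = latest_per_key(events, "customer_id", "updated_at")
domains = email_domains(current)
```

Because each step is ordinary code, further branches can consume `current` in the same way, which is how a single cleaned dataset grows into a network of workflows.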

When it comes to data tools, usability refers to how user-friendly and intuitive they are for people with a broad range of data skills. Many ETL tools are designed for SQL-centric users and offer limited control over more advanced data engineering and automation. Users configure settings manually and use visual drag-and-drop interfaces to build the transformation logic. Third-party tools are often necessary for monitoring, alerting, and orderly orchestration of operations.

Data pipelines, on the other hand, are designed to use APIs as well as UIs to natively program, organize, and sequence the processing logic. They often incorporate features like scriptable configurations, intelligent error handling, and automated notifications, which make them easier and more efficient to use.

ETL tools are typically designed to handle data from a limited number of predefined sources. This can be restrictive, particularly in modern data environments where data can be located across many different types of on-premises and cloud-based sources.

On the other hand, data pipelines are designed not only to ingest data from the usual systems but also to be programmable enough to handle virtually any type of data source. They can also integrate data from many sources at once into a single pipeline network, irrespective of location or format.

ETL tools come in two flavors. The first has a native processing engine designed for serial batch processing. These engines typically have limited capacity, and once a large job has started, all jobs behind it must wait for it to finish. When failures occur, the jobs are simply rerun in the same order, delaying all users. The second flavor is a visual query-building tool that combines the query logic of the transformations and sends it to the data warehouse to run. These tools are limited only by the capacity of the data warehouse, but they have no visibility into how those massive jobs are performing.

Data pipelines, on the other hand, separate the work of actively sequencing and managing individual transformation jobs from the work of processing those jobs, which can run on a variety of cloud infrastructures and processing engines. This architecture is massively scalable for modern data volumes, reduces running costs, and enables the use of the processing engine best suited to each workflow.
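The scheduling difference can be sketched in a few lines. In the hypothetical example below, a serial runner makes short jobs wait behind a long one, while a concurrent runner lets them finish independently. Threads on a single machine and `time.sleep` are stand-ins for distributed cloud engines and real work; this is an assumption for illustration, not a description of how any specific product schedules jobs.

```python
import time
from concurrent.futures import ThreadPoolExecutor, as_completed

# Hypothetical jobs: (name, simulated runtime in seconds).
JOBS = [("big_backfill", 0.5), ("daily_sales", 0.05), ("marketing_kpis", 0.05)]


def run_job(name, seconds):
    time.sleep(seconds)  # stand-in for real transformation work
    return name


def run_serially(jobs):
    """One engine, one queue: every job waits for the one ahead of it."""
    return [run_job(name, seconds) for name, seconds in jobs]


def run_concurrently(jobs, max_workers=3):
    """Independent jobs run side by side; results arrive as each finishes."""
    finished = []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [pool.submit(run_job, name, seconds) for name, seconds in jobs]
        for fut in as_completed(futures):
            finished.append(fut.result())
    return finished


serial_order = run_serially(JOBS)          # small jobs wait for big_backfill
concurrent_order = run_concurrently(JOBS)  # small jobs are not blocked
```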

While ETL tools are able to manage sequences of ingest, transform, and share operations, they often lack real orchestration capabilities. This means that they are limited to basic sequences of transformation operations, usually within their own engine or the data warehouse they run on.

In contrast, data pipelines can coordinate these tasks across entire networks of pipelines, and can operate in complex data environments across multiple types of infrastructure. This includes not only the ingestion, transformation, and sharing of data, but also error handling, reruns, data quality checks, and more. Data pipelines ensure that each task is executed in the right order, at the right time, and under the right conditions.
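A minimal sketch of dependency-aware orchestration, assuming Python 3.9+ for the standard-library `graphlib` module. The task names and the rule "skip anything downstream of a failed task" are illustrative choices; production orchestrators layer scheduling, retries, and state persistence on top of this basic ordering.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline network: each task maps to the set of tasks it depends on.
DEPENDENCIES = {
    "ingest_orders": set(),
    "ingest_customers": set(),
    "clean_orders": {"ingest_orders"},
    "quality_check": {"clean_orders"},
    "join_orders_customers": {"quality_check", "ingest_customers"},
    "share_to_warehouse": {"join_orders_customers"},
}


def run_pipeline(dependencies, tasks):
    """Run tasks in dependency order; skip any task whose upstream did not succeed."""
    status = {}
    for name in TopologicalSorter(dependencies).static_order():
        if any(status[dep] != "ok" for dep in dependencies[name]):
            status[name] = "skipped"
            continue
        try:
            tasks[name]()
            status[name] = "ok"
        except Exception:
            status[name] = "failed"
    return status


def failing_quality_check():
    raise ValueError("null order_id found")  # simulated data quality failure


tasks = {name: (lambda: None) for name in DEPENDENCIES}  # no-op placeholders
tasks["quality_check"] = failing_quality_check
status = run_pipeline(DEPENDENCIES, tasks)
```

Here the failed quality check stops the join and the share step, while the unrelated `ingest_customers` task still runs.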

ETL tools typically require considerable manual setup, constant monitoring, and ongoing operational intervention to run the workloads and jobs. Automation in the ETL world is usually limited to basic scheduling.

Data pipelines, however, are designed with a high degree of automation in mind. They can be configured to automatically run workloads end-to-end, tracking lineage as they ingest data from multiple sources, transform it, and share it to the destinations. Moreover, they can automatically handle errors, recover from failures and rerun tasks if necessary, and send notifications when certain conditions are met. This automation enforces data quality rules, generates complete logs of every action, reduces the need for manual operations, and enhances the efficiency and reliability of data pipelines.
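Automatic recovery often comes down to a retry policy plus an alert. The sketch below assumes a simple exponential backoff and a caller-supplied `notify` callback (both hypothetical choices, not any product's API); it retries a flaky task and raises an alert only when all attempts are exhausted.

```python
import time


def run_with_retries(task, max_attempts=3, base_delay=0.1, notify=print):
    """Run task(); on failure, wait with exponential backoff and try again.

    After the final failed attempt, call notify() and re-raise the error so
    an orchestrator can mark downstream work as blocked.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception as exc:
            if attempt == max_attempts:
                notify(f"task failed after {attempt} attempts: {exc}")
                raise
            time.sleep(base_delay * 2 ** (attempt - 1))


# Usage: a hypothetical ingestion step that fails twice, then succeeds.
calls = {"n": 0}


def flaky_ingest():
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("source temporarily unavailable")
    return "42 rows ingested"


alerts = []
result = run_with_retries(flaky_ingest, base_delay=0.01, notify=alerts.append)
```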

ETL tools are typically designed to load data into a single, predefined destination, such as a data warehouse, from which all other uses are drawn. This leads to a restrictive, centralized architecture and incurs additional processing costs to reformat and use the data in multiple places.

Data pipelines, on the other hand, are designed to deliver data to multiple destinations in multiple formats simultaneously. This could include various databases, data warehouses, reverse ETL to applications, or even other data pipelines. This way, data can be used more dynamically without compromising its integrity, and across multiple platforms at lower cost, fueling a wider range of use cases and insights.
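Delivering one dataset to several destinations can be sketched as a fan-out over writer functions. In this hypothetical example, a CSV export, a JSON Lines payload for an application, and an in-memory SQLite table stand in for real destinations; the dataset and destination names are illustrative assumptions.

```python
import csv
import io
import json
import sqlite3

# Hypothetical processed dataset produced by upstream pipeline steps.
daily_sales = [
    {"day": "2024-05-01", "region": "EU", "revenue": 1200.5},
    {"day": "2024-05-01", "region": "US", "revenue": 980.0},
]


def to_csv(rows):
    """Format for analysts who want a spreadsheet-friendly export."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=list(rows[0]))
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


def to_json_lines(rows):
    """Format for an application or API that consumes one JSON object per line."""
    return "\n".join(json.dumps(row) for row in rows)


def to_sqlite(rows, conn):
    """Write into a SQL table standing in for a warehouse; return the row count."""
    conn.execute("CREATE TABLE IF NOT EXISTS daily_sales (day TEXT, region TEXT, revenue REAL)")
    conn.executemany("INSERT INTO daily_sales VALUES (:day, :region, :revenue)", rows)
    conn.commit()
    return conn.execute("SELECT COUNT(*) FROM daily_sales").fetchone()[0]


conn = sqlite3.connect(":memory:")
# The same dataset is delivered to three destinations in three formats.
deliveries = {
    "analyst_csv_export": to_csv(daily_sales),
    "app_api_payload": to_json_lines(daily_sales),
    "warehouse_row_count": to_sqlite(daily_sales, conn),
}
```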

As should be apparent by now, data pipelines offer distinct advantages over the traditional ETL approach. These differences change how data teams operate, raising their productivity and their impact on the business value they generate. Here are the key advantages of data pipelines over ETL:

The modern data stack is laden with a multitude of tools and technologies, each with its own learning curve and complexities. Given its limitations, ETL is just one of these tools, and it must be integrated with multiple others to begin addressing the complex data needs of modern businesses.

Data pipelines, on the other hand, inherently come with many more native capabilities that can reduce the need for additional specialty tools, eliminating integrations and simplifying your data tech stack. This not only reduces the complexity of your data environment but also eases the learning curve for your data team.

A majority of businesses report bottlenecks in their ETL staffing caused by the scarcity of data analytics talent. Data pipelines, with their superior usability and automation, can alleviate this bottleneck. Not only do they enable a broader range of professionals, such as data analysts and data scientists, to contribute, but they also raise the productivity of core data engineering teams by eliminating routine maintenance and data housekeeping.

Data pipelines increase transparency in data operations with real-time monitoring of dataflows, making it easier to track and audit data processes and to detect and troubleshoot problems. This enhanced visibility drives business participation, as stakeholders can validate the accuracy of their data pipelines and understand how data is moving across the network of pipelines, which leads to better stewardship and collaboration between business and technical teams.

As more organizations move toward a data mesh architecture, where data is decentralized and domain-focused, data pipelines become a requirement for getting the right data product to the right teams at the right time. Data pipelines provide the flexibility to draw data from multiple sources across the business and deliver data products across domains in as many formats as each domain needs, enabling seamless operations in a data mesh environment.

In the rapidly evolving data engineering landscape, understanding the tradeoffs between ETL tools and data pipelines is crucial. While ETL has its uses, data pipelines have emerged as a more versatile solution for today’s complex data needs, offering the enhanced flexibility, automation, and scalability that data-driven businesses require.

They can handle far more diverse data scenarios, access and acquire data from more sources, deliver data to multiple destinations in many formats, and scale the processing of data across multiple types of infrastructure without requiring massive new staffing investments.

In conclusion, data pipelines are not just an upgrade to ETL processes but a transformative approach that equips businesses to be future-ready. They are a compelling choice for any organization looking to leverage its data assets effectively in the modern business landscape.

Property of TechnologyAdvice. © 2026 TechnologyAdvice. All Rights Reserved
