End-to-end data pipelines serve as the backbone for organizations aiming to harness the full potential of their data.

This article is sponsored and originally appeared on Ascend.io.

A star-studded baseball team is analogous to an optimized “end-to-end data pipeline” — both require strategy, precision, and skill to achieve success. Just as every play and position in baseball is key to a win, each component of a data pipeline is integral to effective data management.

In baseball, each player’s role, whether it’s batting, fielding, or pitching, contributes to the outcome of the game. Similarly, in data, every step of the pipeline, from data ingestion to delivery, plays a pivotal role in delivering impactful results. In this article, we’ll break down the intricacies of an end-to-end data pipeline and highlight its importance in today’s landscape.

An end-to-end data pipeline is a set of data processing steps that manages the flow of data from its ingestion to its final destination, all within a single platform. Unlike fragmented data pipelines that rely on multiple tools and platforms for different steps, the end-to-end approach streamlines the entire process.

Relying on multiple tools for various steps introduces miscommunication and inefficiencies. The end-to-end approach, on the other hand, ensures a continuous flow of data and removes the intricacies of navigating disparate tools.

This synergy promotes efficiency, precision, and agility in data operations, paving the way for rapid insights and informed decisions. Just as coordinated teamwork and tactical strategy in baseball culminate in game-changing plays, an end-to-end data pipeline orchestrates data processes with precision and delivers timely, impactful results.
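The idea of one pipeline carrying data from ingestion to delivery can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how Ascend or any particular platform implements it: the step names `ingest`, `transform`, and `deliver`, the `run_pipeline` helper, and the sample rows are all hypothetical, and the intermediate `transform` step is an assumption added to give the flow something to do between ingestion and delivery.

```python
from typing import Callable

Record = dict
Step = Callable[[list], list]


def ingest(lines: list[str]) -> list[Record]:
    """Parse raw 'player,at_bats,hits' lines into records (hypothetical format)."""
    rows = []
    for line in lines:
        player, at_bats, hits = line.split(",")
        rows.append({"player": player, "at_bats": int(at_bats), "hits": int(hits)})
    return rows


def transform(rows: list[Record]) -> list[Record]:
    """Drop players with no at-bats and add a batting average (assumed step)."""
    return [
        {**row, "average": round(row["hits"] / row["at_bats"], 3)}
        for row in rows
        if row["at_bats"] > 0
    ]


# Stand-in for a real destination such as a warehouse table or dashboard.
delivered: list[Record] = []


def deliver(rows: list[Record]) -> list[Record]:
    """Write the finished records to the destination and pass them through."""
    delivered.extend(rows)
    return rows


def run_pipeline(data: list, steps: list[Step]) -> list:
    """Run every step in order, handing each step's output to the next."""
    for step in steps:
        data = step(data)
    return data


raw_lines = ["player_a,4,2", "player_b,0,0", "player_c,5,1"]
result = run_pipeline(raw_lines, [ingest, transform, deliver])
```

Because every step is declared in one ordered list, the hand-off between stages is explicit and lives in a single place, which is the property the end-to-end approach is meant to provide.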

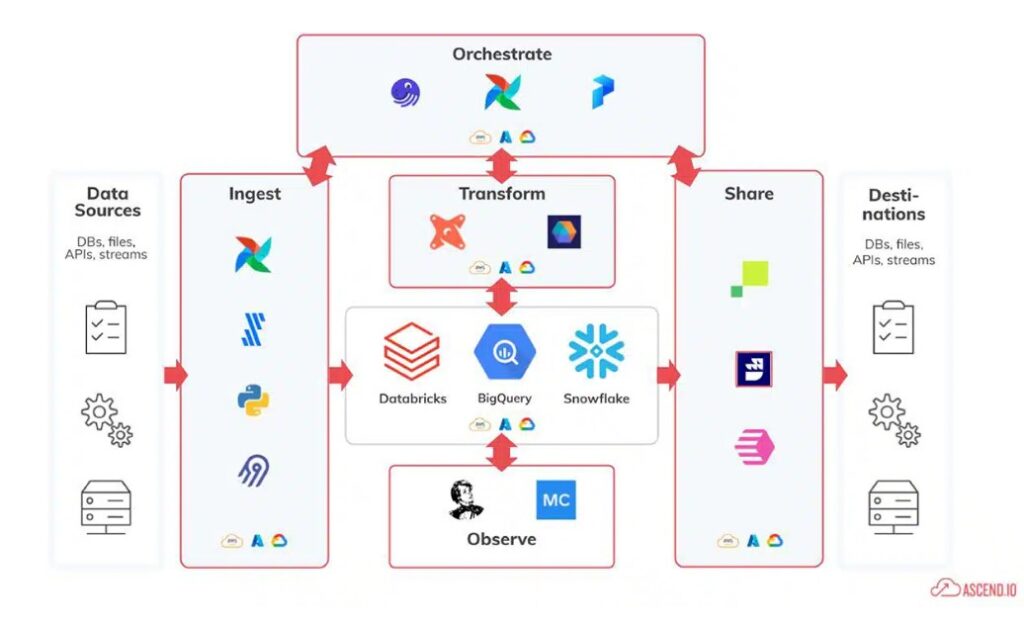

A visual maze: The tangled web of disparate tools commonly used in fragmented data pipelines.

Here are the central stages of an end-to-end data pipeline:

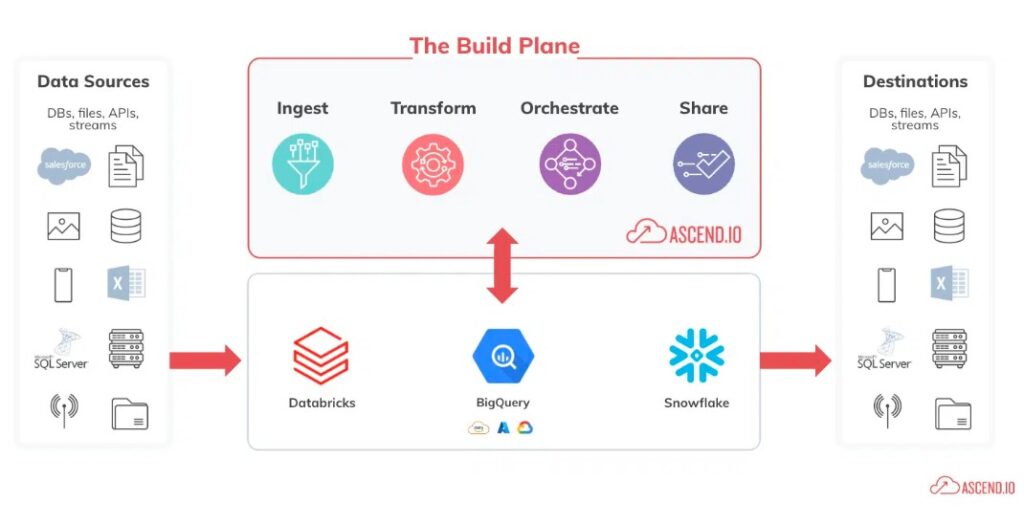

Unified Vision: The streamlined simplicity of an end-to-end data pipeline on a single platform.

In today’s data-driven landscape, the methodology employed in managing and processing data can be a pivotal factor for business success. End-to-end data pipelines have emerged as an integral component, and here’s why:

A fragmented data approach can lead to missed insights, slower decision-making, and operational inefficiencies. An end-to-end data pipeline aligns every component for optimal performance, ensuring businesses not only stay in the game but also consistently hit those home runs.

Imagine the chaos of a baseball team where each player trains with a different coach, follows a unique playbook, and communicates in a different language. The pitcher, catcher, outfielders, and basemen, all crucial to the game, would struggle to coordinate their actions, leading to missed opportunities and glaring errors on the field. Similarly, using disparate tools for each stage of a data pipeline is a recipe for misalignment, inefficiencies, and missed data opportunities.

That’s where Ascend comes in. We wrap every stage of a data pipeline into one package. But our value proposition doesn’t stop there. A notable feature of Ascend’s end-to-end data pipelines is advanced data pipeline automation, which is designed to process, update, and relay data consistently and efficiently. It’s a win-win: reducing human intervention and potential errors also paves the way for scalability.

Whether data volumes grow or business requirements evolve, our automated end-to-end pipelines can adapt, processing larger datasets efficiently while maintaining their performance.

The importance of efficient, reliable, and cohesive data management cannot be overstated. End-to-end data pipelines serve as the backbone for organizations aiming to harness the full potential of their data. By consolidating the entire data process within a single framework, businesses can eliminate inefficiencies, reduce errors, and ensure that data flows smoothly from ingestion to final analysis.

The advantages, ranging from enhanced collaboration and consistency to automation and scalability, are compelling. As you navigate the complexities of the data landscape, remember that an end-to-end data pipeline is not just a tool; it’s your MVP, ready to lead your organization to victory in a competitive, data-centric market.
